
In linear regression, the relationships are modeled using linear predictor functions whose unknown model parameters are estimated from the data. Most commonly, the conditional mean of the response given the values of the explanatory variables (or predictors) is assumed to be an affine function of those values. Less commonly, the conditional median or some other quantile is used. Like all forms of regression analysis, linear regression focuses on the conditional probability distribution of the response given the values of the predictors. It does not model the joint probability distribution of all of these variables, which is the domain of multivariate analysis.

Linear regression was the first type of regression analysis to be studied rigorously and to be used extensively in practical applications. There are two reasons for this. Models that depend linearly on their unknown parameters are easier to fit than models that are non-linearly related to their parameters. The statistical properties of the resulting estimators are also easier to determine.

Linear regression has many practical uses. Most applications fall into one of two broad categories, the first of which is prediction or forecasting. If the goal is to reduce error in prediction or forecasting, linear regression can be used to fit a predictive model to an observed data set of values of the response and explanatory variables. After such a model has been developed, additional values of the explanatory variables may be collected without an accompanying response value. The fitted model can then be used to predict the response.
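The fit-then-predict workflow described above can be sketched in a few lines. This is a minimal illustration that assumes ordinary least squares via NumPy's `np.linalg.lstsq` and uses noise-free synthetic data. The helper names `fit_ols` and `predict` are hypothetical and are not taken from any particular library. A column of ones is prepended so that the fitted conditional mean is an affine function of the explanatory variables.

```python
import numpy as np

def fit_ols(X, y):
    """Hypothetical helper: estimate intercept and slopes by ordinary least squares.

    X: (n, p) array of explanatory variables; y: (n,) response vector.
    Returns [intercept, slope_1, ..., slope_p].
    """
    # Column of ones -> intercept term, making the conditional mean affine in X.
    design = np.column_stack([np.ones(len(X)), X])
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    return beta

def predict(beta, X_new):
    """Hypothetical helper: predict responses for new rows of explanatory values."""
    X_new = np.atleast_2d(X_new)  # expects shape (m, p)
    return beta[0] + X_new @ beta[1:]

# Illustrative synthetic data from y = 1 + 2*x1 - 0.5*x2 (no noise, assumed for clarity).
rng = np.random.default_rng(0)
X = rng.uniform(0, 10, size=(50, 2))
y = 1.0 + 2.0 * X[:, 0] - 0.5 * X[:, 1]

beta = fit_ols(X, y)
print(np.round(beta, 6))             # recovers approximately [1, 2, -0.5]
print(predict(beta, [[3.0, 4.0]]))   # approximately 1 + 2*3 - 0.5*4 = 5
```

For simple linear regression (one explanatory variable), pass `X` as an `(n, 1)` array so that `predict` sees the same shape.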

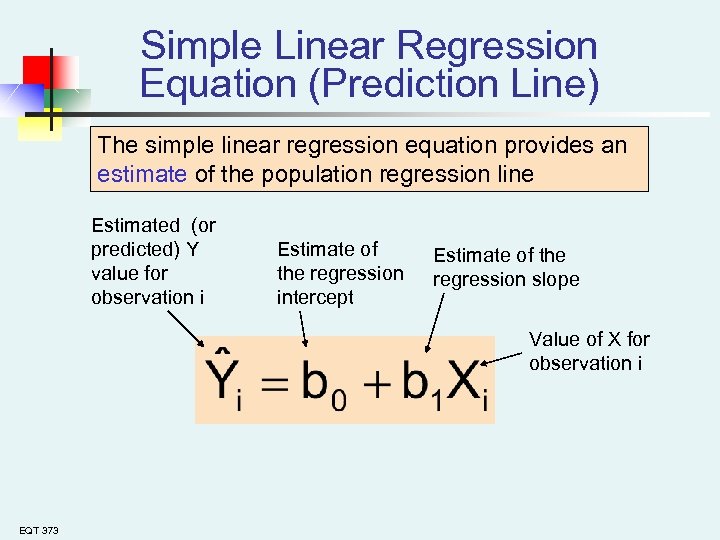

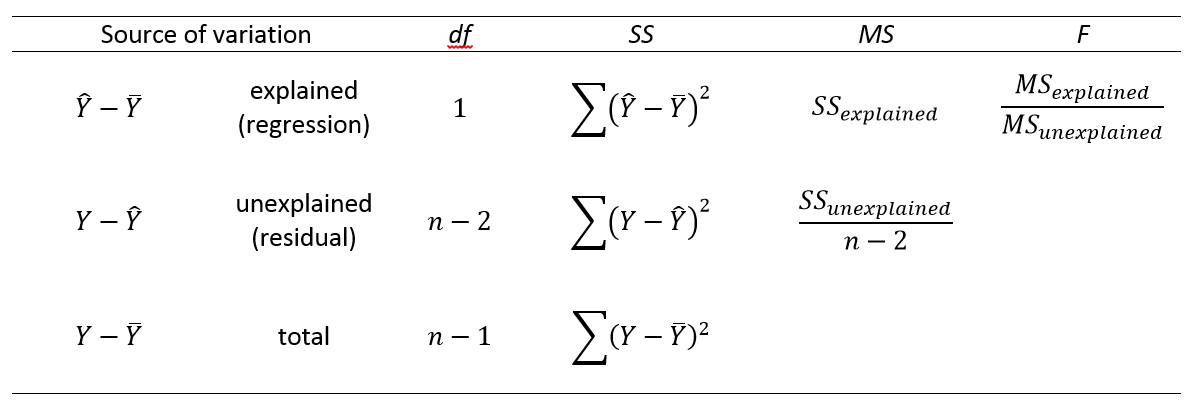

In statistics, linear regression is a statistical model that estimates the linear relationship between a scalar response and one or more explanatory variables (also known as dependent and independent variables). The case of one explanatory variable is called simple linear regression. With more than one explanatory variable, the process is called multiple linear regression. This term is distinct from multivariate linear regression, which predicts multiple correlated dependent variables rather than a single scalar variable. If the explanatory variables are measured with error, then errors-in-variables models, also known as measurement error models, are required.

The F-test can be interpreted as testing whether the increase in variance moving from the restricted model to the more general model is significant.

So how do we interpret the F-statistic in regression? The larger the F-statistic, the more evidence we have to reject the null hypothesis. Note that the F-statistic increases as the difference between the two variances grows. To be more precise, however, we need a critical value of the F-statistic to decide on rejection. Under the null hypothesis, $F$ follows an F-distribution with $J$ and $N-K$ degrees of freedom. We can therefore proceed as described in the previous section and find the critical value $F^{J}_{N-K,\,\alpha}$ in an F-table. In the table, $df_1 = J$ is at the top and $df_2 = N-K$ is at the side. If $F$ is larger than this critical value, we can reject the null hypothesis.
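The sketch below shows the decision rule described above. It assumes the standard notation: $J$ is the number of restrictions, $N$ is the number of observations, and $K$ is the number of parameters in the unrestricted model. It also assumes the usual statistic built from the residual sums of squares of the restricted ($SSE_R$) and unrestricted ($SSE_U$) models:

$$F = \frac{(SSE_R - SSE_U)/J}{SSE_U/(N-K)}$$

The helpers `sse` and `f_test` are hypothetical, and the data are synthetic. The critical value $F^{J}_{N-K,\,\alpha}$ is taken from `scipy.stats.f.ppf` instead of a printed table.

```python
import numpy as np
from scipy import stats

def sse(design, y):
    """Sum of squared residuals from an OLS fit of y on the given design matrix."""
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    resid = y - design @ beta
    return float(resid @ resid)

def f_test(design_u, design_r, y, alpha=0.05):
    """Hypothetical helper: F-test of a restricted model nested in an unrestricted one."""
    n, k = design_u.shape                # N observations, K unrestricted parameters
    j = k - design_r.shape[1]            # J restrictions (columns dropped)
    sse_u, sse_r = sse(design_u, y), sse(design_r, y)
    f_stat = ((sse_r - sse_u) / j) / (sse_u / (n - k))
    # Critical value: upper-alpha quantile of the F(J, N-K) distribution.
    f_crit = stats.f.ppf(1 - alpha, j, n - k)
    return f_stat, f_crit, f_stat > f_crit

# Illustrative data: y depends on x1 only; x2 has no true effect.
rng = np.random.default_rng(1)
n = 100
x1, x2 = rng.normal(size=n), rng.normal(size=n)
y = 1.0 + 2.0 * x1 + rng.normal(size=n)

ones = np.ones(n)
unrestricted = np.column_stack([ones, x1, x2])
restricted = np.column_stack([ones])     # H0: both slopes are zero, so J = 2

f_stat, f_crit, reject = f_test(unrestricted, restricted, y)
print(f"F = {f_stat:.2f}, critical value = {f_crit:.2f}, reject H0: {reject}")
```

To test only whether `x2` belongs in the model ($J = 1$), use `np.column_stack([ones, x1])` as the restricted design.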
